arXiv:adap-org/9806001v1


3 Jun 1998

Neural Network Design for J Function Approximation in Dynamic Programming

Xiaozhong Pang, Paul J. Werbos

Abstract

This paper will show that a new neural network design can solve an example of difficult function approximation problems which are crucial to the field of approximate dynamic programming (ADP). Although conventional neural networks have been proven to approximate smooth functions very well, the use of ADP for problems of intelligent control or planning requires the approximation of functions which are not so smooth. As an example, this paper studies the problem of approximating the J function of dynamic programming applied to the task of navigating mazes in general without the need to learn each individual maze. Conventional neural networks, like multi-layer perceptrons (MLPs), cannot learn this task. But a new type of neural network, the simultaneous recurrent network (SRN), can do so, as demonstrated by successful initial tests. The paper also examines the ability of recurrent neural networks to approximate MLPs and vice versa.

Keywords: Simultaneous recurrent networks (SRNs), multi-layer perceptrons (MLPs), approximate dynamic programming, maze navigation, neural networks.

1 Introduction

1.1 Purpose

This paper has three goals:

First, to demonstrate the value of a new class of neural network which provides a crucial component needed for brain-like intelligent control systems for the future.

Second, to demonstrate that this new kind of neural network provides better function approximation ability for use in more ordinary kinds of neural network applications for supervised learning.

Third, to demonstrate some practical implementation techniques necessary to make this kind of network actually work in practice.

[Figure 1: What is supervised learning. Labels: X(t), supervised learning system, predicted Ŷ(t), actual Y(t).]

1.2 Background

At present, perhaps 90% of neural network applications involve the use of neural networks designed to perform a task called supervised learning (Figure 1). Supervised learning is the task of learning a non-linear function which may have several inputs and several outputs based on some examples of the function. For example, in character recognition, the inputs may be an array of pixels seen from a camera. The desired outputs of the network may be a classification of the character being seen. Another example would be intelligent sensing in the chemical industry, where the inputs might be spectral data from observing a batch of chemicals, and the desired outputs would be the concentrations of the different chemicals in the batch. The purpose of this application is to predict or estimate what is in the batch without the need for expensive analytical tests.

The work in this paper will focus totally on certain tasks in supervised learning. Even though existing neural networks can be used in supervised learning, there can be performance problems depending on what kind of function is learned. Many people have proven many theorems to show that neural networks, fuzzy logic, Taylor series and other function approximators have a universal ability to approximate functions, on the condition that the functions have certain properties and that there is no limit on the complexity of the approximation. In practice, many approximation schemes become useless when there are many input variables because the required complexity grows at an exponential rate. For example, one way to approximate a function would be to construct a table of the values of the function at certain points in the space of possible inputs. Suppose there are 30 input variables and we consider 10 possible values of each input. In that case, the table must have $10^{30}$ numbers in it. This is not useful in practice for many reasons. Actually, however, many popular approximation methods like radial basis functions (RBF) are similar in spirit to a table of values.

In the field of supervised learning, Andrew Barron [30] has proved some function approximation theorems which are much more useful in practice. He has proven that the most popular form of neural networks, the multi-layer perceptron (MLP), can approximate any smooth function. Unlike the case with the linear basis functions (like RBF and Taylor series), the complexity of the network does not grow rapidly as the number of input variables grows.

Unfortunately there are many practical applications where the functions to be approximated are not smooth. In some cases, it is good enough just to add extra layers to an MLP [1] or to use a generalized MLP [2]. However, there are some difficult problems which arise in fields like intelligent control or image processing or even stochastic search where feed-forward networks do not appear powerful enough.

1.3 Summary and Organization of This Paper

The main goal of this paper is to demonstrate the capability of a different kind of supervised learning system based on a kind of recurrent network called the simultaneous recurrent network (SRN). In the next chapter we will explain why this kind of improved supervised learning system will be very important to intelligent control and to approximate dynamic programming. In effect this work on supervised learning is the first step in a multi-step effort to build more brain-like intelligent systems. The next step would be to apply the SRN to static optimization problems, and then to integrate the SRNs into large systems for ADP.

Even though intelligent control is the main motivation for this work, the work may be useful for other areas as well. For example, in zip code recognition, AT&T [3] has demonstrated that feed-forward networks can achieve a high level of accuracy in classifying individual digits. However, AT&T and the others still have difficulty in segmenting the total zip codes into individual digits. Research on human vision by von der Malsburg [4] and others has suggested that some kinds of recurrency in neural networks are crucial to their abilities in image segmentation and binocular vision. Furthermore, researchers in image processing like Laveen Kanal have shown that iterative relaxation algorithms are necessary even to achieve moderate success in such image processing tasks. Conceptually the SRN can learn an optimal iterative algorithm, but the MLP cannot represent any iterative algorithms. In summary, though we are most interested in brain-like intelligent control, the development of SRNs could lead to very important applications in areas such as image processing in the future.

The network described in this paper is unique in several respects. However, it is certainly not the first serious use of a recurrent neural network. Chapter 3 of this paper will describe the existing literature on recurrent networks. It will describe the relationship between this new design and other designs in the literature. Roughly speaking, the vast bulk of research in recurrent networks has been academic research using designs based on ordinary differential equations (ODE) to perform some tasks very different from supervised learning: tasks like clustering, associative memory and feature extraction. The simple Hebbian learning methods [13] used for those tasks do not lead to the best performance in supervised learning. Many engineers have used another type of recurrent network, the time lagged recurrent network (TLRN), where the recurrency is used to provide memory of past time periods for use in forecasting the future. However, that kind of recurrency cannot provide the iterative analysis capability mentioned above. Very few researchers have written about SRNs, a type of recurrent network designed to minimize error and learn an optimal iterative approximation to a function. This is certainly the first use of SRNs to learn a J function from dynamic programming, which will be explained more in chapter 2. This may also be the first empirical demonstration of the need for advanced training methods to permit SRNs to learn difficult functions.

Chapter 4 will explain in more detail the two test problems we have used for the SRN and the MLP, as well as the details of architecture and learning procedure. The first test problem was used mainly as an initial test of a simple form of SRNs. In this problem, we tried to test the hypothesis that an SRN can always learn to approximate a randomly chosen MLP, but not vice versa. Although our results are consistent with that hypothesis, there is room for more extensive work in the future, such as experiments with different sizes of neural networks and more complex statistical analysis.

The main test problem in this work was the problem of learning the J function of dynamic programming. For a maze navigation problem, many neural network researchers have written about neural networks which learn an optimal policy of action for one particular maze [5]. This paper will address the more difficult problem of training a neural network to input a picture of a maze and output the J function for this maze. When the J function is known, it is a trivial local calculation to find the best direction of movement. This kind of neural network should not require retraining whenever a new maze is encountered. Instead it should be able to look at the maze and immediately "see" the optimal strategy. Training such a network is a very difficult problem which has never been solved in the past with any kind of neural network. Also it is typical of the challenges one encounters in true intelligent control and planning. This paper has demonstrated a working solution to this problem for the first time. Now that a system is working on a very simple form of this problem, it would be possible in the future to perform many tests of the ability of this system to generalize its success to many mazes.

In order to solve the maze problem, it was not sufficient only to use an SRN. There are many choices to make when implementing the general idea of SRNs or MLPs. Chapter 5 will describe in detail how these choices were made in this work. The most important choices were:

1. Both for the MLP and for the feed-forward core of the SRN we used the generalized MLP design [2], which eliminates the need to decide on the number of layers.

2. For the maze problem, we used a cellular or weight-sharing architecture which exploits the spatial symmetry of the problem and reduces dramatically the number of weights. In effect we solved the maze problem using only five distinct neurons. There are interesting parallels between this network and the hippocampus of the human brain.

3. For the maze problem, an adaptive learning rate (ALR) procedure was used to prevent oscillation and ensure convergence.

4. Initial values for the weights and the initial input vector for the SRN were chosen essentially at random, by hand. More systematic methods are available for future work, but this was sufficient for success in this case.

Finally, Chapter 6 will discuss the simulation results in more detail, give the conclusions of this paper and mention some possibilities for future work.

2 Motivation

In this chapter we will explain the importance of this work. As discussed above, the paper shows how to use a new type of neural network in order to achieve better function approximation than what is available from the types of neural networks which are popular today. This chapter will try to explain why better function approximation is important to approximate dynamic programming (ADP), intelligent control and understanding the brain. Image processing and other applications have already been discussed in the Introduction. These three topics (ADP, intelligent control and understanding the brain) are all closely related to each other and provide the original motivation for the work of this paper.

The purpose of this paper is to make a core contribution to developing the most powerful possible system for intelligent control. In order to build the best intelligent control systems, we need to combine the most suitable mathematics together with some understanding of natural intelligence in the brain.

There is a lot of interest in intelligent control in the world. Some control systems which are called intelligent are actually very quick and easy things. There are many people who try to move step by step to add intelligence into control, but a step-by-step approach may not be enough by itself. Sometimes to achieve a complex, difficult goal, it is necessary to have a plan; thus some parts of the intelligent control community have developed a more systematic vision or plan for how it could be possible to achieve real intelligent control. First, one must think about the question of what intelligent control is. Then, instead of trying to answer this question in one step, we try to develop a plan to reach the design. Actually there are two questions:

1. How could we build an artificial system which replicates the main capabilities of brain-like intelligence, somehow unified together as they are unified together in the brain?

2. How can we understand what the capabilities in the brain are and how they are organized in a functional engineering view, i.e. how are those circuits in the human brain arranged to learn how to perform different tasks?

It would be best to understand how the human brain works before building an artificial system. However, at the present time, our understanding of the brain is limited. But at least we know that local recurrency plays a critical role in the higher part of the human brain [6][7][8][4].

Another reason to use SRNs is that SRNs can be very useful in ADP mathematically. Now we will discuss what ADP can do for intelligent control and understanding the brain. The remainder of this chapter will address three questions in order:

1. What is ADP?

2. What is the importance of ADP to intelligent control and understanding the brain?

3. What is the importance of SRNs to ADP?

2.1 What is ADP and J Function

To explain what ADP is, let us consider the original Bellman equation [9]:

$$J(R(t)) = \max_{u(t)} \bigl( U(R(t),u(t)) + \langle J(R(t+1)) \rangle \bigr) / (1+r) - U_0 \qquad (1)$$

where $r$ and $U_0$ are constants that are used only in infinite-time-horizon problems and then only sometimes, and where the angle brackets refer to expectation value. In this paper we actually use:

$$J(R(t)) = \max_{u(t)} \bigl( U(R(t),u(t)) + \langle J(R(t+1)) \rangle \bigr) \qquad (2)$$

since the maze problem does not involve an infinite time-horizon. Instead of solving for the value of J in every possible state, R(t), we can use a function approximation method like neural networks to approximate the J function. This is called approximate dynamic programming (ADP). This paper is not doing true ADP because in true ADP we do not know what the J function is and must therefore use indirect methods to approximate it. However, before we try to use SRNs as a component of an ADP system, it makes sense to first test the ability of an SRN to approximate a J function, in principle.

Now we will try to explain the intuitive meaning of the Bellman equation (Equation (1)) and the J function according to the treatment taken from [2]. To understand ADP, one must first review the basics of classical dynamic programming, especially the versions developed by Howard [28] and Bertsekas.

Classical dynamic programming is the only exact and efficient method to compute the optimal control policy over time, in a general nonlinear stochastic environment. The only reason to approximate it is to reduce computational cost, so as to make the method affordable (feasible) across a wide range of applications. In dynamic programming, the user supplies a utility function which may take the form U(R(t),u(t)), where the vector R is a Representation or estimate of the state of the environment (i.e. the state vector), and a stochastic model of the plant or environment. Then "dynamic programming" (i.e. solution of the Bellman equation) gives us back a secondary or strategic utility function J(R). The basic theorem is that maximizing U(R(t),u(t)) + J(R(t+1)) yields the optimal strategy, the policy which will maximize the expected value of U added up over all future time. Thus dynamic programming converts a difficult problem in optimizing over many time intervals into a straightforward problem in short-term maximization. In classical dynamic programming, we find the exact function J which exactly solves the Bellman equation. In ADP, we learn a kind of "model" of the function J; this "model" is called a "Critic." (Alternatively, some methods learn a model of the derivatives of J with respect to the variables $R_i$; these correspond to Lagrange multipliers, $\lambda_i$, and to the "price variables" of microeconomic theory. Some methods learn a function related to J, as in the Action-Dependent Adaptive Critic (ADAC) [29].)
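As a minimal sketch of the classical (exact) dynamic programming step, the C fragment below solves Equation (2) on a tiny grid maze by repeated sweeps. The 4x4 layout, the utility of -1 per move, the deterministic moves and the names `maze`, `solve_j` and `BLOCKED` are illustrative assumptions, not taken from the paper's experiments.

```c
#define ROWS 4
#define COLS 4
#define BLOCKED (-1000.0)   /* sentinel J for walls and unreached cells (assumption) */

/* Hypothetical maze: 1 = wall, 0 = free cell. The goal is at (0, 0). */
static const int maze[ROWS][COLS] = {
    {0, 0, 0, 0},
    {1, 1, 0, 1},
    {0, 0, 0, 0},
    {0, 1, 1, 0},
};

/* Fill J so that J(goal) = 0 and J(cell) = -1 + max over neighbours of
 * J(neighbour): Equation (2) with U = -1 per move and no randomness. */
void solve_j(double J[ROWS][COLS])
{
    static const int dr[4] = {-1, 1, 0, 0};
    static const int dc[4] = {0, 0, -1, 1};
    int r, c, k, changed;

    for (r = 0; r < ROWS; r++)
        for (c = 0; c < COLS; c++)
            J[r][c] = BLOCKED;
    J[0][0] = 0.0;

    do {  /* sweep the grid until no value changes */
        changed = 0;
        for (r = 0; r < ROWS; r++) {
            for (c = 0; c < COLS; c++) {
                double best = BLOCKED;
                if (maze[r][c] == 1 || (r == 0 && c == 0))
                    continue;  /* walls and the goal keep their values */
                for (k = 0; k < 4; k++) {
                    int nr = r + dr[k], nc = c + dc[k];
                    if (nr < 0 || nr >= ROWS || nc < 0 || nc >= COLS)
                        continue;
                    if (maze[nr][nc] == 1 || J[nr][nc] == BLOCKED)
                        continue;
                    if (J[nr][nc] - 1.0 > best)
                        best = J[nr][nc] - 1.0;
                }
                if (best > J[r][c]) {
                    J[r][c] = best;
                    changed = 1;
                }
            }
        }
    } while (changed);
}
```

Once `solve_j` has filled a `double J[ROWS][COLS]` buffer, the best move from any free cell is simply the neighbour with the largest J, which is the "trivial local calculation" mentioned in the Introduction.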

2.2 Intelligent Control and Robust Control

To understand the human brain scientifically, we must have some suitable mathematical concepts. Since the human brain makes decisions like a control system, it is an example of an intelligent control system. Neuroscientists do not yet understand the general ability of the human brain to learn to perform new tasks and solve new problems even though they have studied the brain for decades. Some people compare the past research in this field to what would happen if we spent years studying radios without knowing the mathematics of signal processing.

We first need some mathematical ideas of how it is possible for a computing system to have this kind of capability based on distributed parallel computation. Then we must ask what are the most important abilities of the human brain which unify all of its more specific abilities in specific tasks. It would be seen that the most important ability of the brain is the ability to learn over time how to make better decisions in order to better maximize the goals of the organism. The natural way to imitate this capability in engineering systems is to build systems which learn over time how to make decisions which maximize some measure of success or utility over future time. In this context, dynamic programming is important because it is the only exact and efficient method for maximizing utility over future time. In the general situation, where random disturbances and nonlinearity are expected, ADP is important because it provides both the learning capability and the possibility of reducing computational cost to an affordable level. For this reason, ADP is the only approach we have to imitating this kind of ability of the brain.

The similarity between some ADP designs and the circuitry of the brain has been discussed at length in [10] and [11]. For example, there is an important structure in the brain called the limbic system which performs some kinds of evaluation or reinforcement functions, very similar to the functions of the neural networks that must approximate the J function of dynamic programming. The largest part of the limbic system, called the hippocampus, is known to possess a higher degree of local recurrency [8].

In general, there are two ways to make classical controllers stable despite great uncertainty about parameters of the plant to be controlled. For example, in controlling a high speed aircraft, the location of the center of gravity is not known exactly, because it depends on the cargo of the airplane and the location of the passengers. One way to account for such uncertainties is to use adaptive control methods. We can get similar results, but more assurance of stability in most cases [16], by using related neural network methods, such as adaptive critics with recurrent networks. It is like adaptive control but more general. There is another approach called robust control or H∞ control, which tries to design a fixed controller which remains stable over a large range in parameter space. Baras and Patel [31] have for the first time solved the general problem of H∞ control for general partially observed nonlinear plants. They have shown that this problem reduces to a problem in nonlinear, stochastic optimization. Adaptive dynamic programming makes it possible to solve large scale problems of this type.

2.3 Importance of the SRN to ADP

ADP systems already exist which perform relatively simple control tasks like stabilizing an aircraft as it lands under windy conditions [12]. However, this kind of task does not really represent the highest level of intelligence or planning. True intelligent control requires the ability to make decisions when future time periods will follow a complicated, unknown path starting from the initial state. One example of a challenge for intelligent control is the problem of navigating a maze, which we will discuss in chapter 4. A true intelligent control system should be able to learn this kind of task. However, the ADP systems in use today could never learn this kind of task. They use conventional neural networks to approximate the J function. Because the conventional MLP cannot approximate such a J function, we may deduce that an ADP system constructed only from MLPs will never be able to display this kind of intelligent control. Therefore, it is essential that we find a kind of neural network which can perform this kind of task. As we will show, the SRN can fill this crucial gap. There are additional reasons for believing that the SRN may be crucial to intelligent control, as discussed in chapter 13 of [9].

3 Alternative Forms of Recurrent Networks

3.1 Recurrent Networks in General

There is a huge literature on recurrent networks. Biologists have used many recurrent models because the existence of recurrency in the brain is obvious. However, most of the recurrent networks implemented so far have been classic style recurrent networks, as shown on the left hand of Figure 2. Most of these networks are formulated from ordinary differential equation (ODE) systems. Usually their learning is based on a restricted concept of Hebbian learning. Originally in the neural network field, the most popular neural networks were recurrent networks like those which Hopfield [14] and Grossberg [15] used to provide associative memory.

[Figure 2: Recurrent networks. Labels: classical recurrent networks (feature extraction, clustering: ART, SOM, ...; static function minimization: Hopfield, Cauchy; associative memory: Hopfield, Hassoun); recurrent networks: TLRN (dynamic systems, prediction), SRN (better function approximation).]

Associative memory networks can actually be applied to supervised learning. But in actuality their capabilities are very similar to those of look-up tables and radial basis functions. They make predictions based on similarity to previous examples or prototypes. They do not really try to estimate general functional relationships. As a result these methods have become unpopular in practical applications of supervised learning. The theorems of Barron discussed in the Introduction show that MLPs do provide better function approximation than do simple methods based on similarity.

There has been substantial progress in the past few years in developing new associative memory designs. Nevertheless, the MLP is still better for the specific task of function approximation which is the focus of this paper.

In a similar way, classic recurrent networks have been used for tasks like clustering, feature extraction and static function optimization. But these are different problems from what we are trying to solve here. Actually the problem of static optimization will be considered in future stages of this research. We hope that the SRN can be useful in such applications after we have used it for supervised learning. When people use the classic Hopfield networks for static optimization, they specify all the weights and connections in advance [14]. This has limited the success of this kind of network for large scale problems where it is difficult to guess the weights. With the SRN we have methods to train the weights in that kind of structure. Thus the guessing is no longer needed. However, to use SRNs in that application requires refinement beyond the scope of this paper.

There have also been researchers using ODE neural networks who have tried to use training schemes based on a minimization of error instead of Hebbian approaches. However, in practical applications of such networks, it is important to consider the clock rates of computation and data sampling. For that reason, it is both easier and better to use error minimizing designs based on discrete time rather than ODE.

3.2 Structure of Discrete-Time Recurrent Networks

If the importance of neural networks is measured by the number of words published, then the classic networks dominate the field of recurrent networks. However, if the value is measured based on economic value of practical application, then the field is dominated by time-lagged recurrent networks (TLRNs). The purpose of the TLRN is to predict or classify time-varying systems using recurrency as a way to provide memory of the past. The SRN has some relation with the TLRN but it is designed to perform a fundamentally different task. The SRN uses recurrency to represent more complex relationships between one input vector X(t) and one output Y(t) without consideration of the other times t. Figure 3 and Figure 4 show us more details about the TLRN and the SRN.

In control applications, u(t) represents the control variables which we use to control the plant. For example, if we design a controller for a car engine, the X(t) variables are the data we get from our sensors. The u(t) variables would include the valve settings which we use to try to control the process of combustion. The R(t) variables provide a way for the neural networks to remember past time cycles, and to implicitly estimate important variables which cannot be observed directly. In fact, the application of TLRNs to automobile control is the most valuable application of recurrent networks ever developed so far.

[Figure 3: Time lagged recurrent network (TLRN). Labels: X(t), R(t), Z^-1, u(t), X(t+1), TLRN.]

[Figure 4: Simultaneous recurrent network (SRN). Labels: X, f, y.]

A simultaneous recurrent network (Figure 4) is defined as a mapping:

$$Y(t) = F(X(t), W) \qquad (3)$$

which is computed by iterating over the following equation:

$$y^{(n+1)}(t) = f(y^{(n)}(t), X(t), W) \qquad (4)$$

where f is some sort of feed-forward network or system, and Y is defined as:

$$Y(t) = \lim_{n \to \infty} y^{(n)}(t) \qquad (5)$$

When we use Y in this paper, we use n = 20 instead of ∞.

In Figure 4, the outputs of the neural network come back again as inputs to the same network. However, in concept there is no time delay. The inputs and outputs should be simultaneous. That is why it is called a simultaneous recurrent network (SRN). In practice, of course, there will always be some physical time delay between the outputs and the inputs. However, if the SRN is implemented in fast computers, this time delay may be very small compared to the delay between different frames of input data.

In Figure 4, X refers to the input data at the current time frame t. The vector y represents the temporary output of the network, which is then recycled as an additional set of inputs to the network. At the center of the SRN is actually the feed-forward network which implements the function f. (In designing an SRN, you can choose any feed-forward network or system you like. The function f simply describes which network you use.) The output of the SRN at any time t is simply the limit of the temporary output y.

In Equations (3) and (4), notice that there are two integers, n and t, which could both represent some kind of time. The integer t represents a slower kind of time cycle, like the delay between frames of incoming data. The integer n represents a faster kind of time, like the computing cycle of a fast electronic chip. For example, if we build a computer to analyze images coming from a movie camera, "t" and "t+1" represent two successive incoming pictures from the movie camera. There are usually only 32 frames per second. (In the human brain, it seems that there are only about 10 frames per second coming into the neocortex.) But if we use a fast neural network chip, the computational cycle, that is, the time between "n" and "n+1", could be as small as a microsecond.

In actuality, it is not necessary to choose between time-lagged recurrency (from t to t+1) and simultaneous recurrency (from n to n+1). It is possible to build a hybrid system which contains both types of recurrency. This could be very useful in analyzing data like movie pictures, where we need both memory and some ability to segment the images. [9] discusses how to build such a hybrid. However, before building such a hybrid, we must first learn to make SRNs work by themselves.

Finally, please note that the TLRN is not the only kind of neural network used in predicting dynamical systems. Even more popular is the time delayed neural network (TDNN), shown in Figure 5. The TDNN is popular because it is easy to use. However, it has less capability, in principle, because it has no ability to estimate unknown variables. It is especially weak when some of these variables change slowly over time and require memory which persists over long time periods. In addition, the TLRN fits the requirements of ADP directly, while the TDNN does not [9][16].

[Figure 5: Time delayed neural network (TDNN). Labels: X(t), X(t-1), X(t-k), u(t), u(t-1), u(t-k), TDNN, X(t+1).]

3.3 Training of SRNs and TLRNs

There are many types of training that have been used for recurrent networks. Different types of training give rise to different kinds of capabilities for different tasks. For the tasks which we have described for the SRN and the TLRN, the proper forms of training all involve some calculation of the derivatives of error with respect to the weights. Usually after these derivatives are known, the weights are adapted according to a simple formula as follows:

$$W_{ij}^{\text{new}} = W_{ij}^{\text{old}} - LR \cdot \frac{\partial\,\mathrm{Error}}{\partial W_{ij}} \qquad (6)$$

where LR is called the learning rate.
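Equation (6) is an ordinary gradient descent step. A minimal C sketch is shown below; the flat weight array and the function name `update_weights` are chosen here purely for illustration and do not come from the paper's code.

```c
#include <stddef.h>

/* Apply Equation (6) in place: w[i] <- w[i] - lr * grad[i],
 * where grad[i] holds the derivative of the error with respect to w[i]. */
void update_weights(double *w, const double *grad, size_t count, double lr)
{
    size_t i;
    for (i = 0; i < count; i++)
        w[i] -= lr * grad[i];
}
```

The five training methods discussed in this section differ only in how the `grad` values are obtained, not in this update step.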

There are five main ways to train SRNs, all based on different methods for calculating or approximating the derivatives. Four of these methods can also be used with TLRNs. Some can be used for control applications. But the details of those applications are beyond the scope of this paper. These five types of training are listed in Figure 6. For this paper, we have used two of these methods: Backpropagation through time (BTT) and Truncation.

[Figure 6: Types of SRN training. Labels: backpropagation through time, truncation, simultaneous backpropagation, error critics, forward propagation.]

The five methods are:

1. Backpropagation through time (BTT). This method and forward propagation are the two methods which calculate the derivatives exactly. BTT is also less expensive than forward propagation.

2. Truncation. This is the simplest and least expensive method. It uses only one simple pass of backpropagation through the last iteration of the model. Truncation is probably the most popular method used to adapt SRNs even though the people who use it mostly just call it ordinary backpropagation.

3. Simultaneous backpropagation. This is more complex than truncation, but it still can be used in real-time learning. It calculates derivatives which are exact in the neighborhood of equilibrium but it does not account for the details of the network before it reaches the neighborhood of equilibrium.

4. Error critics (shown in Figure 7). This provides a general approximation to BTT which is suitable for use in real-time learning [9].

5. Forward propagation. This, like BTT, calculates exact derivatives. It is often considered suitable for real-time learning because the calculations go forward in time. However, when there are n neurons and m connections, the cost of this method per unit of time is proportional to nm. Because of this high cost, forward propagation is not really brain-like any more than BTT.

[Figure 7: Error critics. Labels: TLRN, Critic, Error, R(t), R(t+1), X(t), X(t+1), u(t), u(t+1), λ̂(t), λ̂(t+1).]

3.3.1 Backpropagation through time (BTT)

BTT is a general method for calculating all the derivatives of any outcome or result of a process which involves repeated calls to the same network or networks used to help calculate some kind of final outcome variable or result E. In some applications, E could represent utility, performance, cost or other such variables. But in this paper, E will be used to represent error. BTT was first proposed and implemented in [17]. The general form of BTT is as follows:

    for k = 1 to T
        do forward calculation(k);
    calculate result E;
    calculate direct derivatives of E with respect to outputs of forward calculations;
    for k = T to 1
        backpropagate through forward calculation(k), calculating running totals where appropriate.

These steps are illustrated in Figure 8. Notice that this algorithm can be applied to all kinds of calculations. Thus we can apply it to cases where k represents data frames t as in the TLRNs, or to cases where k represents internal iterations n as in the SRNs. Also note that each box of calculation receives input from some dashed lines which represent the derivatives of E with respect to the output of the box. In order to calculate the derivatives coming out of each calculation box, one simply uses backpropagation through the calculation of that box starting out from the incoming derivatives. We will explain in more detail how this works in the SRN case and the TLRN case.

So far as we know, BTT has been applied in published working systems for TLRNs and for control, but not yet for SRNs until now. However, Rumelhart, Hinton and Williams [18] did suggest that someone should try this.

The application of BTT for TLRNs is described at length in [2] and [9]. The procedure is illustrated in Figure 9. In this example the total error is actually the sum of error over each time t where t goes from 1 to T.

Therefore the outputs of the TLRN at each time t (t [...]

    #include [...]
    #include [...]
    #include [...]

    void F_NET2(double F_Yhat, double W[30][30], double x[30], int n, int m, int N,
                double F_W[30][30], double F_net[30], double F_Ws[30], double Ws, double F_x[30]);
    void NET(double W[30][30], double x[30], double ww[30], int n, int m, int N, double Yhat[30]);
    void synthesis(int B[30][30], int A[30][30], int n1, int n2);
    void pweight(double Ws, double F_Ws_T, double ww[30], double F_net_T[30],
                 double W[30][30], double F_W_T[30][30], int n, int N, int m);
    int minimum(int s, int t, int u, int v);
    int min(int k, int l);
    double f(double x);

    void main()
    {
        int i, j, it, iz, ix, iy, lt, m, maxt, n, n1, n2, nn, nm, N, p, q, po, t, TT;
        int A[30][30], B[30][30];
        double a, b, dot, e, e1, e2, es, mu, s, sum, F_Ws_T, Ws, F_Yhat, wi;
        double W[30][30], x[30], F_net_T[30], F_Ws[30], F_W_O[30][30], F_W[30][30];
        double F_W_T[30][30], F_net[30], ww[30], yy[21][12][8][8];
        double Yhat[30], F_y[21][12][8][8], F_x[30], F_Jhat[30][30];
        double S_F_W1, S_F_W2, Lr_W, S_F_net1, S_F_net2, Lr_ww, Lr_Ws, F_Ws_O;
        double y[50][50], F_net_O[50], F_Ws1, F_Ws2, W_O[50][50], ww_O[50], Ws_O;
        FILE *f;

        /* Number of inputs, neurons and output: 7, 3, 1 */
        /* 'n' is the number of the active neurons */
        /* 'm' and 'N' both are the number of inputs */
        /* 'nm' is the number of memory is: 5 */
        /* 'nn+1' * 'nn+1' is the size of the maze */
        /* 'TT' is the number of trials */
        /* 'lt' is the number of the interval time */
        /* 'maxt' is the max number for T in figure [8] */
        /* Lr_Ws, Lr_ww and Lr_W are the learning rates for Ws, ww and W */
        a = 0.9; b = 0.2; n = 5; m = 11; N = 11; nn = 6; nm = 5;
        TT = 30000; lt = 50; maxt = 20; wi = 25; Ws = 40; e = 0;
        po = pow(2, 31) - 1;

        /* Initial values of Old */
        F_Ws_O = 1;
        for (i = m+1; i [...]
        [...] -1; q--) {
        for (ix = 0; ix [...]
        [...] S_F_W2) || (S_F_W1 == S_F_W2)) Lr_W = Lr_W * (a + b);
        else if (S_F_W1 [...]
        [...] S_F_net2) || (S_F_net1 == S_F_net2)) Lr_ww = Lr_ww * (a + b);
        else if (S_F_net1 [...]
        [...] F_Ws2) || (F_Ws1 == F_Ws2)) Lr_Ws = Lr_Ws * (a + b);
        else if (F_Ws1 [...]
        [...] m; i--) {
        for (j = i+1; j [...]
        [...] 0; i--)
        for (j = m+1; j [...]
        [...] l) r = l;
        else r = k;
        return r;
    }

    void pweight(double Ws, double F_Ws_T, double ww[30], double F_net_T[30],
                 double W[30][30], double F_W_T[30][30], int n, int N, int m)
    {
        int i, j;
        for (i = m+1; i [...]


- arXiv:adap-org/9806001v1相关文档

- 优先级exhentai.org

- stratexhentai.org

- Componentexhentai.org

- elleexhentai.org

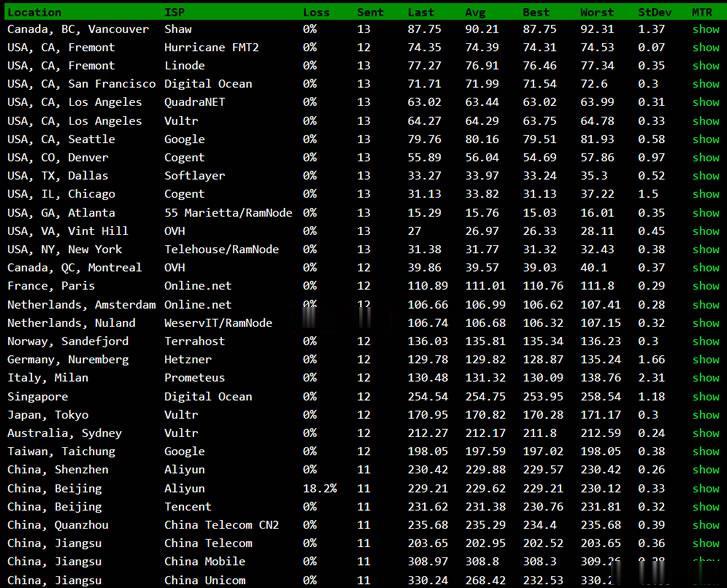

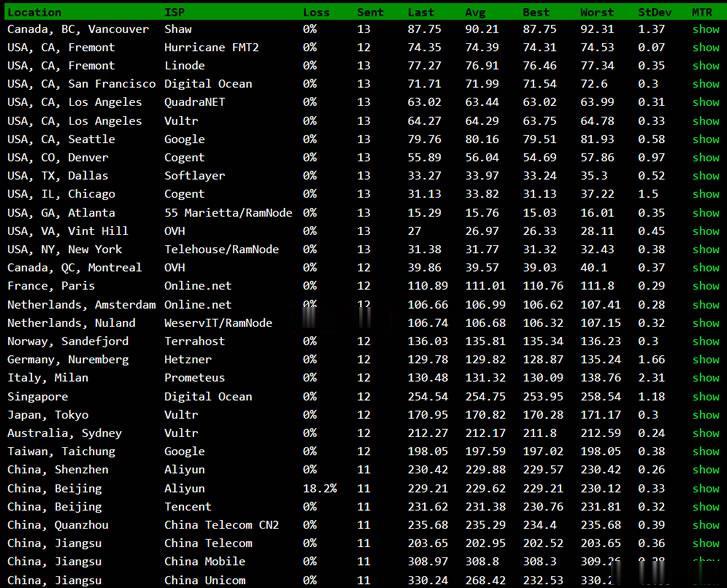

BuyVM新设立的迈阿密机房速度怎么样?简单的测评速度性能

BuyVM商家算是一家比较老牌的海外主机商,公司设立在加拿大,曾经是低价便宜VPS主机的代表,目前为止有提供纽约、拉斯维加斯、卢森堡机房,以及新增加的美国迈阿密机房。如果我们有需要选择BuyVM商家的机器需要注意的是注册信息的时候一定要规范,否则很容易出现欺诈订单,甚至你开通后都有可能被禁止账户,也是这个原因,曾经被很多人吐槽的。这里我们简单的对于BuyVM商家新增加的迈阿密机房进行简单的测评。如...

Virtono:圣何塞VPS七五折月付2.2欧元起,免费双倍内存

Virtono是一家成立于2014年的国外VPS主机商,提供VPS和服务器租用等产品,商家支持PayPal、信用卡、支付宝等国内外付款方式,可选数据中心共7个:罗马尼亚2个,美国3个(圣何塞、达拉斯、迈阿密),英国和德国各1个。目前,商家针对美国圣何塞机房VPS提供75折优惠码,同时,下单后在LET回复订单号还能获得双倍内存的升级。下面以圣何塞为例,分享几款VPS主机配置信息。Cloud VPSC...

IMIDC日本多IP服务器$88/月起,E3-123x/16GB/512G SSD/30M带宽

IMIDC是一家香港本土运营商,商家名为彩虹数据(Rainbow Cloud),全线产品自营,自有IP网络资源等,提供的产品包括VPS主机、独立服务器、站群独立服务器等,数据中心区域包括香港、日本、台湾、美国和南非等地机房,CN2网络直连到中国大陆。目前主机商针对日本独立服务器做促销活动,而且提供/28 IPv4,国内直连带宽优惠后每月仅88美元起。JP Multiple IP Customize...

exhentai.org为你推荐

-

金评媒朱江喜剧明星“朱江”的父亲叫什么?硬盘工作原理硬盘的读写原理www.522av.com现在怎样在手机上看AV336.com求一个游戏的网站 你懂得www.585ccc.com手机ccc认证查询,求网址ww.66bobo.com有的网址直接输入***.com就行了,不用WWW, 为什么?机器蜘蛛有谁知道猎人的机械蜘蛛在哪捉的www.99vv1.comwww.in9.com是什么网站啊?4399宠物连连看2.5我怎么找不到QQ里面的宠物连连看呢猴山条约游猴山,观猴子